Thoughts on data science, statistics and machine learning.

Misconceptions about OCR Bounding Boxes

Over the last year, I have been working on an application that auto-translates documents while maintaining the layout and formatting. It has many bells and whistles, from simple geometric tricks to sophisticated gen-AI algorithms and microservices. But basically, the app performs the simple task of identifying text in documents, machine-translating it, and reinserting it such that the output document “looks” like the input. Most documents that my app has to process are PDFs.

Essays of Revolt - Jack London

My company uses an e-HRM system. The system is why my colleagues and I never forget to wish each other on birthdays and anniversaries. Systems like these save us from the embarrassment of appearing indifferent. Other systems like smartphones ensure that the most halfhearted birthday greeting appears sincere and colourful. All you have to do is type “Happy” and the autocomplete does the rest - it composes the shortest message needed to show how much you care.

Book Review: The Great Arc - John Keay

This book evokes two very different reactions in me. The primary reaction is jubilant, almost romantic. The second is gloomy. Imagine you’re watching Oppenheimer: spirits rising until the point the bombs actually drop, after which you feel guilty about having felt good in the first place. The Great Trigonometric Survey was completed over the better part of a century, across three generations of mathematicians, physicists and surveyors (they were called compasswallahs - I finally see where Rohit Gupta gets his pseudonym), and at the cost of thousands of lives.

Book Review: A Room of One's Own - Virginia Woolf

I went into this essay expecting that Virginia Woolf had written about what the eponymous room is like - its design and contents. But she deals with a more fundamental issue - that one needs a room of one’s own. The essays are a fine piece of scholarship. I’d never have thought that Woolf’s characteristic device, the “stream of consciousness”, could be used not only as a writing technique, but also as a powerful pedagogical technique.

Open World Games and the Myth of Sisyphus

To the memory of Kevin Conroy. There was only ever one true Batman. You have been playing for months. Slowly and steadily, you have harvested every collectable - making yourself stronger and stronger until you can kill the toughest enemies. Every enemy defeated, every monster slain. No side quest worth doing remains. Those not worth doing are also done because you are a completionist (which is a dignified way of saying that you have no life).

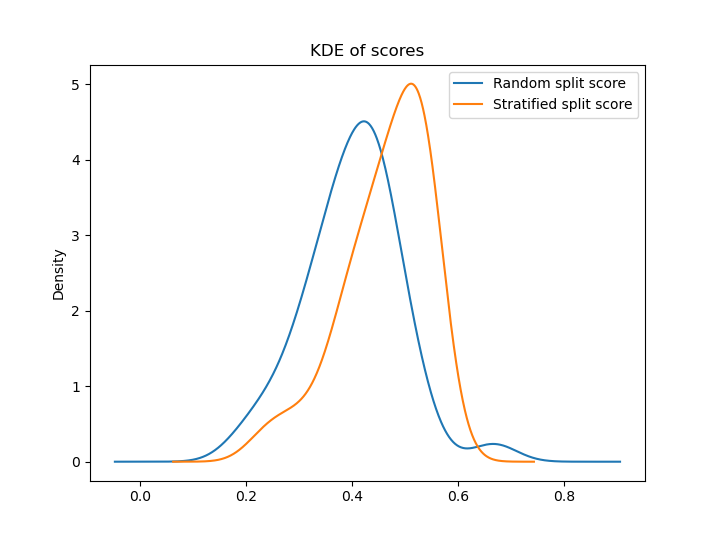

Effective Train/Test Stratification for Object Detection

TL;DR: Here’s a talk based on this post. There’s an unavoidable, inherent difficulty in fine-tuning deep neural networks, which stems from the lack of training data. It would seem ridiculous to a layperson that a pretrained vision model (containing millions of parameters, trained on millions of images) could learn to solve highly specific problems. Therefore, when fine-tuned models do perform well, they seem all the more miraculous. But on the other hand, we also know that it is easier to move from the general to the specific than the reverse.

A Process for Readable Code

I took a course on data structures and algorithms over the last few months. It is offered as a part of IIT Madras’ Online Degree Program in Data Science and Programming, taught by Prof Madhavan Mukund. The program is a MOOC in the true sense, with tens of thousands of students enrolling each year. The DSA course itself is offered every trimester, and sees an average of ~700 enrollments every time.

Book Review: A World Without Email - Cal Newport

This book is a good refresher on Cal Newport’s central thesis, which shows up in both Deep Work and Digital Minimalism, but with email as the central device. The same essential theorem, but a lot of new stories to go with it as corollaries. Of course, it’s not email technology that the book contests, but the hyperactive hive-mind that is enabled by people’s email habits. But here’s the only thing I want to leave a note of: I was mildly annoyed by Newport’s invocation (or perhaps, misappropriation) of Claude Shannon’s information theory.

Book Review: Travels with Charley - John Steinbeck

It’s the end of 2020, and when you’ve been stuck at home for a year, with only your dog as your constant companion, Travels with Charley is a good book to read. But this book is a lot more about Steinbeck’s road trip than about the dog. Steinbeck romanticises everything. If so much as a tree sheds a leaf in front of him, he bursts forth with pages of ideas, thoughts and memories.

Book Review: Baluta - Daya Pawar

The original Marathi edition of Baluta has captivating cover art. It shows a sketch of a crow perched on the rim of an earthen pot, dropping pebbles in the pot, but completely oblivious to the glaring crack running down the pot. I remember that image from a copy lying in one corner of a bookshelf, but I was too young then to be interested. To me, this was just a sign of the artist trying to convey a sense of irony.